Our suite of real estate intelligence & AI tools for professionals (developers, appraisers, brokers) is powered by data from more than 1,000 different data sources. How do we do this at scale while ensuring data quality?

Our challenge is a LONG TAIL situation: many relatively simple but small data sources to maintain, for example pricing information from new projects. In contrast, pricing intelligence in e-commerce or travel can often focus on a handful of leading players or shops.

Here are some strategies other companies may use in a similar situation:

✔ Build pipelines in-house

✔ Request a quote for a custom project from Oxylabs.io, Bright Data, or another provider

✔ Check out data marketplaces, like Databoutique.com

✔ Automate: build AI tools that can detect patterns in product pages

✔ Outsource: data as a service

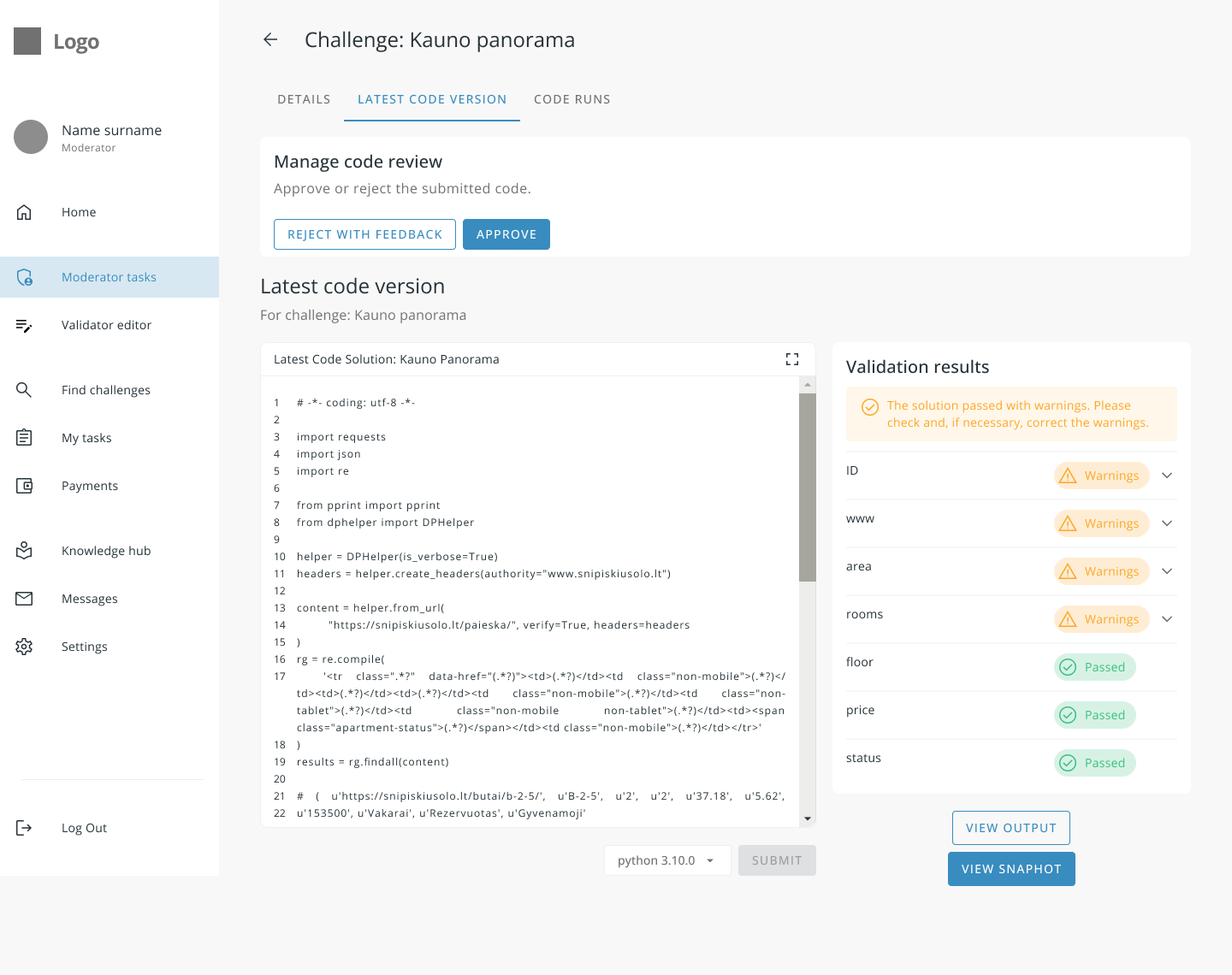

We chose an alternative strategy: we created a data marketplace (you can think of it as Fiverr for data) that matches businesses with data needs to data providers, and the deliverable is code (Python).
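To make "the deliverable is code" a bit more concrete, here is a minimal sketch of what a per-source contract could look like. It assumes each provider delivers a Python function that turns raw source content (CSV here, for simplicity) into typed records, and that the platform runs the same quality checks on every source's output. The `Listing` schema and the `parse_source` and `validate` names are hypothetical illustrations, not our actual interface.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    """One unit from a new project (hypothetical schema)."""
    project: str
    unit_id: str
    price_eur: float
    area_m2: float


def parse_source(raw: str) -> list[Listing]:
    """Provider-delivered parser (hypothetical): raw CSV text in, typed records out."""
    reader = csv.DictReader(io.StringIO(raw))
    return [
        Listing(
            project=row["project"].strip(),
            unit_id=row["unit_id"].strip(),
            price_eur=float(row["price_eur"]),
            area_m2=float(row["area_m2"]),
        )
        for row in reader
    ]


def validate(listings: list[Listing]) -> list[str]:
    """Platform-side checks applied to every delivered parser's output."""
    errors: list[str] = []
    seen: set[str] = set()
    if not listings:
        errors.append("source returned no records")
    for item in listings:
        if item.unit_id in seen:
            errors.append(f"duplicate unit_id {item.unit_id}")
        seen.add(item.unit_id)
        if item.price_eur <= 0:
            errors.append(f"non-positive price for {item.unit_id}")
        if item.area_m2 <= 0:
            errors.append(f"non-positive area for {item.unit_id}")
    return errors


if __name__ == "__main__":
    sample = (
        "project,unit_id,price_eur,area_m2\n"
        "Riverside,A-1,185000,52.5\n"
        "Riverside,A-2,0,48.0\n"
        "Riverside,A-1,190000,53.0\n"
    )
    for problem in validate(parse_source(sample)):
        print(problem)
    # prints:
    # non-positive price for A-2
    # duplicate unit_id A-1
```

Splitting the work this way keeps each task small enough for someone learning to code, while the shared `validate` step is where data quality is enforced consistently across all sources.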

This approach has made us operationally efficient because we can work with people who are still learning to code: these tasks are a perfect fit for getting started and landing a new job. I believe the combination of AI assistance and freelancers is the future of this business.

SUCCESS STORY: CityNow Vilnius has now been completely migrated to this new data platform. We no longer have to touch our GitHub repo to add new data sources; instead, we simply drop new data tasks into the queue.
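One way to get "add a source without touching the core repo" is a registry plus a generic worker. The sketch below assumes parsers register themselves by name (in practice they could live in a separately deployed package) and that tasks go into a queue; an in-process `queue.Queue` stands in for whatever persistent queue a real platform would use. `register`, `DataTask`, `run_queue`, and the source name are hypothetical.

```python
import queue
from dataclasses import dataclass
from typing import Callable

# Hypothetical registry: source name -> parser callable.
Parser = Callable[[str], list[dict]]
PARSERS: dict[str, Parser] = {}


def register(name: str) -> Callable[[Parser], Parser]:
    """Decorator that adds a parser to the registry; the worker never changes."""
    def wrap(fn: Parser) -> Parser:
        PARSERS[name] = fn
        return fn
    return wrap


@dataclass
class DataTask:
    source: str  # name of the registered parser to run
    raw: str     # raw content already fetched for this source


def run_queue(tasks: "queue.Queue[DataTask]") -> tuple[dict[str, list[dict]], list[str]]:
    """Generic worker: drain the queue and dispatch each task to its parser.

    Returns parsed rows per source, plus the names of sources with no parser.
    """
    results: dict[str, list[dict]] = {}
    unknown: list[str] = []
    while True:
        try:
            task = tasks.get_nowait()
        except queue.Empty:
            break
        parser = PARSERS.get(task.source)
        if parser is None:
            unknown.append(task.source)
        else:
            results[task.source] = parser(task.raw)
        tasks.task_done()
    return results, unknown


@register("example_project_prices")
def parse_prices(raw: str) -> list[dict]:
    """A new source is just a registered function plus a queued task."""
    rows = []
    for line in raw.strip().splitlines():
        unit, price = line.split(";")
        rows.append({"unit_id": unit, "price_eur": float(price)})
    return rows


if __name__ == "__main__":
    q: "queue.Queue[DataTask]" = queue.Queue()
    q.put(DataTask("example_project_prices", "B-1;210000\nB-2;225500"))
    print(run_queue(q))
```

The design choice here is that `run_queue` only knows about the registry, so onboarding a new source means shipping one function and enqueueing one task rather than editing the worker itself.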

Reach out to us if you would like to try the data platform, whether you are starting to code or are a business that needs data delivered regularly as Excel or via an API: info@citynow.org